Quantum Volume Explained: A Guide to Quantum Computing Performance Benchmarking

In quantum computing, the power of a machine isn't measured by its qubit count alone. As the field advances, assessing the real capabilities of quantum hardware matters more and more. This guide covers Quantum Volume, a metric introduced by IBM to give a holistic measure of a quantum computer's performance. It explains why this single number is widely used for benchmarking progress and evaluating machine quality, which factors contribute to a high Quantum Volume, and where the metric falls short.

What is Quantum Volume? Deconstructing the Core Concept

In the nascent stages of quantum computing, simply stating the number of qubits a machine possesses offered a rudimentary gauge of its potential. However, as quantum computers transitioned from theoretical constructs to tangible, albeit noisy, devices, the need for a more nuanced and comprehensive metric became glaringly apparent. Enter Quantum Volume (QV), a single-number metric that encapsulates not just the quantity of qubits but also their quality, connectivity, and the accuracy of the operations performed on them. It’s a holistic benchmark that offers a more realistic assessment of a quantum computer's ability to execute complex quantum algorithms.

Why is this necessary? Imagine comparing two classical computers. One might have more RAM, but if its processor is slow, or its storage is bottlenecked, the raw RAM count alone doesn't tell you its true performance. Similarly, in quantum computing, a machine with 50 qubits might be less powerful than one with 20 qubits if the qubit quality of the 20-qubit system is significantly higher, boasting lower error rates and longer coherence times. Quantum Volume addresses this by integrating multiple performance parameters into a single, understandable score.

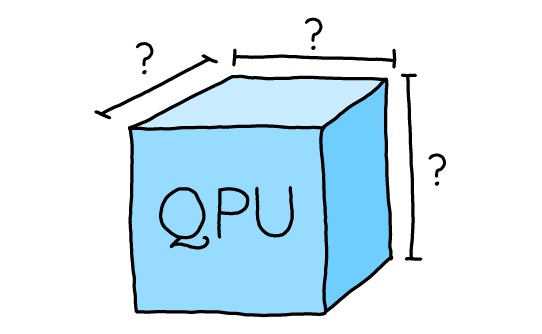

At its core, Quantum Volume quantifies the largest random square circuit that a quantum computer can successfully execute, where "square" means the number of qubits (width) equals the circuit depth (number of layers). The "volume" refers to this conceptual space of quantum operations that the machine can handle. A higher Quantum Volume indicates a more powerful and reliable quantum computer, capable of running more complex and intricate quantum algorithms before decoherence and errors render the results meaningless.

The Factors That Influence Quantum Volume

Achieving a high Quantum Volume is not a simple feat; it requires a delicate balance and optimization of several critical hardware and software characteristics. Each factor plays a pivotal role in determining a quantum computer's overall performance and, consequently, its Quantum Volume score.

Number of Qubits (n)

While Quantum Volume moves beyond a mere qubit count, the number of available qubits remains a foundational component. More qubits theoretically allow for larger quantum circuits and more complex computations. However, it's crucial to understand that simply adding more qubits without maintaining their quality can actually decrease the Quantum Volume, as additional qubits introduce more potential for errors and crosstalk.

Qubit Connectivity (C)

The architecture of a quantum computer dictates how easily qubits can interact with each other. High qubit connectivity means that any qubit can interact with many other qubits, reducing the need for costly "swap" operations that move quantum states between non-connected qubits. These swap operations introduce additional gates and potential errors, thus limiting circuit depth and overall performance. A well-connected qubit topology is vital for executing a wide range of quantum algorithms efficiently.
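As a rough illustration of the swap overhead described above, the sketch below counts the extra gates needed to apply a two-qubit gate between distant qubits on a linear chain. It assumes nearest-neighbor coupling only, a naive routing strategy that walks one qubit next to the other, and the standard decomposition of a SWAP into three CNOTs. The function name and return format are hypothetical, chosen only for this example.

```python
def swap_overhead_linear(q_a: int, q_b: int, cnots_per_swap: int = 3) -> dict:
    """Estimate the routing cost of one two-qubit gate on a linear chain.

    Assumption: only neighboring qubits are coupled, and one qubit is
    swapped step by step until it sits next to the other (naive routing).
    """
    if q_a == q_b:
        raise ValueError("a two-qubit gate needs two distinct qubits")
    distance = abs(q_a - q_b)
    swaps = distance - 1  # adjacent qubits need no swaps
    return {"swaps": swaps, "extra_cnots": swaps * cnots_per_swap}


if __name__ == "__main__":
    print(swap_overhead_linear(0, 1))  # neighbors on the chain
    print(swap_overhead_linear(0, 4))  # qubits at opposite ends of a 5-qubit chain
```

Real compilers route far more cleverly than this, and the qubits usually are not swapped back afterwards, so treat the count as an order-of-magnitude feel for why sparse connectivity costs depth.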

Gate Fidelity (F)

Perhaps one of the most critical factors, gate fidelity refers to the accuracy with which quantum operations (gates) are performed. Every time a quantum gate is applied, there's a small probability of error. These gate errors accumulate rapidly in complex circuits. High-fidelity gates ensure that the intended quantum state is maintained throughout the computation. Improving gate fidelity is a primary focus for quantum hardware engineers, as even marginal improvements can significantly boost Quantum Volume and the reliability of quantum computations.
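To get a feel for how quickly gate errors accumulate, here is a deliberately crude model: it treats every two-qubit gate as failing independently, so the chance that a circuit runs without any gate error is roughly the fidelity raised to the number of gates. It assumes a square circuit with n layers and n // 2 two-qubit gates per layer, and it ignores single-qubit gates, readout errors, decoherence, and compilation overhead. The function name is hypothetical, and the result is not the heavy-output probability used by the actual benchmark.

```python
def estimated_success(n_qubits: int, two_qubit_fidelity: float) -> float:
    """Crude estimate: probability that no two-qubit gate fails.

    Assumption: a square circuit with n layers, each holding n // 2
    two-qubit gates, and independent gate errors.
    """
    if not 0.0 <= two_qubit_fidelity <= 1.0:
        raise ValueError("fidelity must lie between 0 and 1")
    gate_count = n_qubits * (n_qubits // 2)
    return two_qubit_fidelity ** gate_count


if __name__ == "__main__":
    for fidelity in (0.99, 0.999):
        for n in (4, 8, 16):
            estimate = estimated_success(n, fidelity)
            print(f"fidelity={fidelity}, n={n}: ~{estimate:.3f}")
```

Because the gate count grows roughly with the square of the circuit size, a small gain in fidelity buys a disproportionately large gain in the circuit sizes that remain usable.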

Coherence Time (T)

Qubits are fragile. They maintain their quantum properties (superposition and entanglement) only for a limited duration, known as their coherence time. Environmental noise, temperature fluctuations, and interactions with the surroundings cause decoherence, leading to the loss of quantum information. Longer coherence times mean that qubits can maintain their delicate states for longer, allowing for deeper quantum circuits to be executed before the information degrades. This is especially critical for running algorithms that require many sequential operations.
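The link between coherence time and circuit depth can be made concrete with simple arithmetic. The sketch below estimates how many sequential gate layers fit inside a chosen fraction of the coherence time. The function name, the budget fraction, and the example numbers are illustrative assumptions, not figures for any particular device.

```python
def max_layers_within_coherence(coherence_time_us: float,
                                layer_time_us: float,
                                budget_fraction: float = 0.25) -> int:
    """Rough depth budget: layers that fit in a fraction of the coherence time.

    Assumption: every layer takes the same time, and the circuit should
    finish within `budget_fraction` of the coherence time to stay usable.
    """
    if coherence_time_us <= 0 or layer_time_us <= 0:
        raise ValueError("times must be positive")
    if not 0 < budget_fraction <= 1:
        raise ValueError("budget_fraction must be in (0, 1]")
    usable_time_us = coherence_time_us * budget_fraction
    return int(usable_time_us // layer_time_us)


if __name__ == "__main__":
    # Hypothetical device: 100 microseconds coherence, 0.5 microseconds per layer.
    print(max_layers_within_coherence(100.0, 0.5))
```

Doubling the coherence time doubles this budget, which is why coherence improvements translate directly into deeper runnable circuits.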

Compiler Efficiency (E)

Beyond the physical hardware, the software layer plays a crucial role. A quantum compiler translates high-level quantum algorithms into the specific gate operations that a particular quantum computer can execute. An efficient compiler can optimize circuits by:

- Reducing gate count: Minimizing the total number of operations required.

- Optimizing gate placement: Arranging gates to minimize errors and leverage qubit connectivity.

- Mapping logical qubits to physical qubits: Intelligently assigning abstract qubits to the best-performing physical qubits on the chip.
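One of the simplest gate-count reductions a compiler can perform is cancelling a self-inverse gate that is immediately followed by an identical copy of itself. The sketch below shows that single pass on a toy circuit representation, a list of (name, qubits) tuples. The representation and function name are assumptions made for this example and do not correspond to any specific compiler's API.

```python
# Gates that undo themselves when applied twice to the same qubits.
SELF_INVERSE = {"h", "x", "y", "z", "cx", "cz", "swap"}


def cancel_adjacent_inverses(circuit):
    """Remove back-to-back pairs of identical self-inverse gates.

    `circuit` is a list of (gate_name, qubit_tuple) entries in execution
    order. A stack lets cancellations cascade: H X X H reduces to nothing.
    Only gates adjacent in the list are considered (a conservative choice).
    """
    optimized = []
    for gate in circuit:
        name, _qubits = gate
        if optimized and name in SELF_INVERSE and optimized[-1] == gate:
            optimized.pop()  # the pair multiplies to the identity
        else:
            optimized.append(gate)
    return optimized


if __name__ == "__main__":
    circuit = [("h", (0,)), ("cx", (0, 1)), ("cx", (0, 1)), ("h", (0,)), ("x", (1,))]
    print(cancel_adjacent_inverses(circuit))
```

Production compilers go much further (commutation analysis, resynthesis, noise-aware qubit mapping), but even this tiny pass shows how software alone can shorten a circuit.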

How Quantum Volume is Measured: The Benchmarking Process

The Quantum Volume metric isn't just a theoretical concept; it's derived from a specific, rigorous benchmarking protocol. Understanding this process is key to appreciating what the Quantum Volume score truly represents.

The IBM Quantum Volume Protocol

The most widely adopted method for measuring Quantum Volume was pioneered by IBM. The protocol involves executing a series of specially designed, randomized quantum circuits. Here's a simplified breakdown:

- Square Circuits: The protocol constructs "square" circuits, meaning the number of qubits (width) used in the circuit is equal to its depth (number of layers of operations). This ensures that both factors – qubit count and circuit depth – are tested simultaneously.

- Randomized Two-Qubit Gates: Each layer randomly pairs up the qubits and applies a randomly chosen two-qubit gate to each pair. This randomness ensures that the benchmark isn't biased towards specific hardware optimizations or a particular class of algorithms. It forces the machine to demonstrate general-purpose capability.

- Success Probability Threshold: For a given circuit size (number of qubits, n), many random circuits are run. An output bitstring counts as "heavy" if its ideal probability lies above the median of the ideal output distribution. The machine passes at size n if its measured heavy-output probability exceeds 2/3 with sufficient statistical confidence, and the largest passing n determines the Quantum Volume. This success rate indicates the machine's ability to maintain coherence and perform operations accurately.

- Exponential Growth: The Quantum Volume scales exponentially with circuit size. A Quantum Volume of $2^n$ means the machine can reliably execute a random circuit on 'n' qubits with a depth of 'n'. For example, a Quantum Volume of 32 means the machine can run a 5x5 circuit, since $2^5 = 32$. Each additional qubit-and-layer step doubles the score, so every doubling of Quantum Volume reflects a real improvement in the size of circuit the hardware can handle.
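The scoring step of the protocol can be sketched in a few lines. The code below identifies the heavy outputs of one circuit (bitstrings whose ideal probability lies above the median), computes the fraction of measured shots that landed on them, and checks the average against the 2/3 threshold. The function names and the toy numbers are hypothetical, the ideal probabilities are assumed to come from a classical simulation, and the confidence-interval check that a full measurement requires is left out.

```python
from statistics import median


def heavy_outputs(ideal_probs):
    """Bitstrings whose ideal probability is above the median.

    `ideal_probs` must cover every possible bitstring, including those
    with zero probability, so the median is taken over the full distribution.
    """
    cutoff = median(ideal_probs.values())
    return {bits for bits, p in ideal_probs.items() if p > cutoff}


def heavy_output_probability(ideal_probs, counts):
    """Fraction of measured shots that landed on a heavy output."""
    heavy = heavy_outputs(ideal_probs)
    shots = sum(counts.values())
    return sum(c for bits, c in counts.items() if bits in heavy) / shots


def passes_threshold(hops, threshold=2 / 3):
    """Simplified pass check: mean heavy-output probability above 2/3."""
    return sum(hops) / len(hops) > threshold


if __name__ == "__main__":
    # Toy 2-qubit example with made-up numbers.
    ideal = {"00": 0.40, "01": 0.10, "10": 0.35, "11": 0.15}
    counts = {"00": 350, "01": 120, "10": 330, "11": 200}
    hop = heavy_output_probability(ideal, counts)
    print(sorted(heavy_outputs(ideal)), hop, passes_threshold([hop]))
```

An ideal device scores well above 2/3 on these circuits, while a device producing pure noise scores about 1/2, so the threshold separates meaningful computation from randomness.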

Practical advice for evaluating Quantum Volume reports: Always look for the specific threshold used (e.g., 2/3 success probability) and the methodology. A Quantum Volume score is only meaningful if the underlying test protocol is robust and transparent.

Interpreting the Quantum Volume Score

A higher Quantum Volume score directly implies a more capable quantum computer. It signifies that the machine can:

- Handle More Qubits Reliably: Not just possessing them, but effectively using them without excessive errors.

- Execute Deeper Circuits: Maintain coherence and accuracy over a larger number of sequential operations.

- Perform More Complex Algorithms: The ability to run deeper and wider circuits opens the door to tackling more intricate problems that require significant quantum resources.

Quantum Volume in the NISQ Era and Beyond

Quantum Volume holds particular significance in the current phase of quantum computing, often referred to as the NISQ (Noisy Intermediate-Scale Quantum) era. This period is characterized by quantum computers that have a modest number of qubits (roughly 50 to a few hundred) but are inherently noisy and prone to errors, without the error correction needed for fault-tolerant quantum computing.

The Significance for Current Quantum Computers (NISQ)

In the NISQ era, Quantum Volume serves as a crucial benchmark because it directly addresses the limitations imposed by noise and limited coherence. It's not enough to have many noisy qubits; they must be high-quality enough to run a meaningful computation. QV helps distinguish truly performant machines from those with merely large qubit counts. For researchers and developers working on early quantum applications, understanding a machine's Quantum Volume is essential for designing circuits that can realistically be executed with a reasonable expectation of success.

For instance, a quantum algorithm designed to run on 10 ideal qubits, with a circuit depth comparable to its width, might require a Quantum Volume of 1024 ($2^{10}$). If the available hardware only has a QV of 64 ($2^6$), significant modifications, simplifications, or error mitigation techniques would be necessary to run the algorithm, or it might simply be beyond the current machine's capabilities. This highlights QV's role as a realistic gauge of what's achievable today.
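The comparison above can be turned into a quick rule-of-thumb check. The sketch below converts a reported Quantum Volume back into the side length of the largest passing square circuit and compares a planned circuit against it. Both function names are hypothetical, and the check is only a heuristic: Quantum Volume is measured on random circuits, so a structured algorithm may fare better or worse than this suggests.

```python
def qv_to_square_size(quantum_volume: int) -> int:
    """Return n such that quantum_volume == 2**n."""
    if quantum_volume < 1:
        raise ValueError("Quantum Volume must be a positive power of two")
    n = quantum_volume.bit_length() - 1
    if 2 ** n != quantum_volume:
        raise ValueError("Quantum Volume must be a positive power of two")
    return n


def fits_rule_of_thumb(width: int, depth: int, quantum_volume: int) -> bool:
    """Heuristic: does the circuit fit inside the largest passing square?"""
    n = qv_to_square_size(quantum_volume)
    return width <= n and depth <= n


if __name__ == "__main__":
    print(qv_to_square_size(64))           # side of the largest passing square
    print(fits_rule_of_thumb(10, 10, 64))  # the 10-qubit example from the text
    print(fits_rule_of_thumb(5, 6, 64))
```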

Limitations and Criticisms of Quantum Volume

While invaluable, Quantum Volume is not without its critics and limitations. It's important to understand these nuances to avoid misinterpreting its significance:

- Not Universal for All Tasks: Quantum Volume is based on random circuits. While this provides a general measure of performance, it doesn't necessarily reflect a machine's efficiency or suitability for all types of quantum algorithms. An algorithm with highly specific connectivity requirements, for example, might perform poorly on a machine with high QV but a sub-optimal qubit layout for that specific algorithm.

- Doesn't Capture All Aspects: QV doesn't explicitly measure aspects like the speed of gate operations (clock speed of the quantum processor), the latency of control systems, or the ease of programming. These factors can also significantly impact the practical utility of a quantum computer.

- Focus on Square Circuits: The "square" nature of the benchmark (depth equals width) means it may not fully capture performance for very wide but shallow circuits, or very deep but narrow circuits.

- Hardware-Specific Optimizations: Some argue that hardware providers might optimize their systems specifically for the QV benchmark, potentially skewing results without genuinely improving general-purpose utility.

Due to these limitations, other benchmarks are emerging, such as CLOPS (Circuit Layer Operations Per Second), introduced by IBM, which focuses on the rate at which a quantum processor can execute circuit layers, or application-specific benchmarks tailored to particular problem domains (e.g., quantum chemistry simulations or optimization problems). For a holistic view, it's best to consider Quantum Volume alongside these other metrics and the specific requirements of your desired quantum algorithms.

The Path to Fault-Tolerant Quantum Computing

The journey from NISQ machines to truly fault-tolerant quantum computers is long and arduous. Fault tolerance requires not just low error rates but also sophisticated quantum error correction codes that can actively detect and correct errors as they occur. While Quantum Volume doesn't directly measure fault tolerance, it represents a critical stepping stone. As Quantum Volume increases, it indicates that quantum hardware is becoming more robust and reliable, making the implementation of effective error correction codes more feasible. Higher QV scores signify that researchers are getting closer to the quality of qubits and gates needed to build truly scalable and error-resilient quantum systems capable of tackling problems currently beyond our imagination.

Actionable Insights for Developers and Researchers

For anyone serious about engaging with quantum computing, understanding Quantum Volume is more than academic; it offers practical guidance for selecting hardware, designing algorithms, and tracking progress.

Evaluating Quantum Hardware

When assessing quantum hardware for your projects, look beyond the advertised qubit count. Here’s what to consider:

- Quantum Volume Score: This is your primary indicator of overall machine quality and capability for general-purpose quantum computation. A higher QV generally means more reliable results for complex circuits.

- Connectivity Map: Examine the qubit connectivity. Does the architecture align with the interaction patterns required by your target algorithms? High connectivity reduces the need for costly swap operations.

- Specific Gate Set and Fidelity: Understand the native gates offered by the hardware and their individual fidelities. Some algorithms might perform better with specific gate sets.

- Noise Profile: Beyond overall error rates, investigate the specific types of noise (e.g., readout errors, gate errors, crosstalk) and their magnitudes. This can inform your choice of error mitigation strategies.

- Software Stack and Compiler: Evaluate the ease of use of the quantum programming environment, the efficiency of the compiler, and the availability of optimization tools. A powerful compiler can make less-than-perfect hardware more usable.

Practical advice: Match hardware capabilities to algorithmic needs. Don't simply chase the highest qubit count; prioritize the machine that offers the best balance of QV, connectivity, and gate fidelity for your specific computational challenge. For instance, if your algorithm requires all-to-all connectivity, a fully connected architecture with fewer qubits might be preferable to a linear chain that posts a higher QV but offers only limited direct connections.

Optimizing Quantum Circuits

Even with high-QV machines, optimizing your quantum circuits is crucial for success in the NISQ era. Every gate counts:

- Minimize Circuit Depth: Reduce the number of sequential operations. Shorter circuits are less susceptible to decoherence and accumulated errors.

- Reduce Gate Count: Use clever circuit identities and optimization techniques to achieve the same computational outcome with fewer gates.

- Leverage Qubit Connectivity: Design your circuits to take advantage of the native connectivity of the target quantum computer, minimizing the need for swap gates.

- Implement Error Mitigation: Explore techniques like measurement (readout) error mitigation, dynamical decoupling, or probabilistic error cancellation to improve result accuracy without full error correction. (For deeper insights, explore resources on quantum error mitigation strategies.)

The Future of Quantum Benchmarking

As quantum technology matures, so too will the methods for benchmarking. While Quantum Volume will likely remain a foundational metric, expect to see the rise of more specialized benchmarks:

- Application-Specific Benchmarks: Metrics tailored to specific use cases, such as the accuracy of quantum chemistry simulations, the speed of optimization algorithms, or the performance of quantum machine learning models.

- Resource Estimation Benchmarks: Tools that predict the resources (qubits, gates, coherence time) required to run specific algorithms on future fault-tolerant machines.

- Throughput and Latency Benchmarks: Metrics that assess the speed at which quantum computers can process jobs and deliver results, crucial for integrating quantum capabilities into existing computational workflows.

Staying abreast of these evolving benchmarks will be essential for anyone involved in the quantum ecosystem, ensuring that assessments of quantum hardware remain relevant and comprehensive.

Frequently Asked Questions About Quantum Volume

What is the difference between qubit count and Quantum Volume?

The qubit count simply states the number of quantum bits a machine possesses. It's a raw number that doesn't account for the quality or connectivity of those qubits. Quantum Volume, on the other hand, is a holistic metric that combines qubit count with factors like qubit connectivity, gate fidelity, and coherence time. It provides a more realistic measure of a quantum computer's effective power and its ability to run complex circuits, even if it has fewer qubits than another machine with lower quality qubits.

Why is Quantum Volume so important for quantum computing progress?

Quantum Volume is crucial because it provides a standardized, objective benchmark for comparing different quantum computers and tracking the overall progress of quantum hardware development. In the current NISQ era, where qubits are noisy and prone to errors, QV helps quantify how many high-quality, interconnected qubits can be reliably used in a circuit of a certain depth. It's a vital indicator for researchers and developers to understand the practical limits of current quantum hardware and to guide efforts toward building more robust and capable machines.

Does a higher Quantum Volume guarantee quantum advantage?

No, a higher Quantum Volume does not guarantee quantum advantage. Quantum advantage refers to the point where a quantum computer can solve a specific problem significantly faster or more efficiently than any classical computer. While a higher QV indicates a more capable machine that can run more complex algorithms, achieving quantum advantage depends on many factors, including the specific algorithm, the problem size, and the classical computing resources available for comparison. QV is a general performance metric, not a direct measure of practical problem-solving superiority.

How does error rate impact Quantum Volume?

Error rates have a direct and significant negative impact on Quantum Volume. High error rates, whether from gate operations, readout, or decoherence, mean that quantum information degrades rapidly. As circuits become deeper and involve more qubits, the accumulated errors quickly overwhelm the computation, leading to incorrect results. A machine with high error rates will fail to meet the success probability threshold required by the Quantum Volume benchmark for even moderately sized circuits, resulting in a lower QV score. Therefore, reducing error rates is a primary driver for increasing Quantum Volume.

Are there other benchmarks besides Quantum Volume?

Yes, while Quantum Volume is a prominent benchmark, the quantum computing community is actively developing and using other metrics. Examples include CLOPS (Circuit Layer Operations Per Second), which measures the rate of executing circuit layers, and various application-specific benchmarks designed to assess performance for particular problem domains like quantum chemistry or optimization. These alternative benchmarks often provide complementary insights into different aspects of quantum computer performance, and a comprehensive evaluation typically considers multiple metrics.
